Shadow AI: The Enterprise Data Leak You Can't Ignore

Artificial intelligence has fundamentally changed how enterprises operate, yet this transformation introduces an unprecedented vector for corporate data exfiltration. When a software engineer pastes proprietary source code into a consumer-grade chatbot to optimize a function, the organization loses control over its intellectual property.

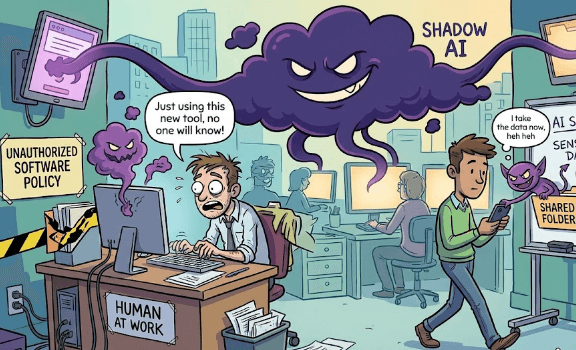

This phenomenon is known as Shadow AI, and it represents a growing threat to production systems globally. Employees are rapidly adopting unsanctioned AI tools to streamline daily tasks, completely bypassing established corporate governance and identity access controls. The data perimeter is no longer defined by physical networks or traditional gateways. Instead, it has dissolved into the cognitive workflows of individual employees interacting with external large language models.

Understanding the Shadow AI Crisis

The industry has spent two decades grappling with Shadow IT - the unsanctioned deployment of cloud infrastructure and software platforms. Shadow AI presents a profoundly different risk profile. Shadow IT involves the decentralization of infrastructure, whereas Shadow AI involves the unregulated democratization of complex data processing.

In today's corporate environment, the primary danger lies not merely in using unvetted applications, but in the highly sensitive nature of the data being supplied to them. Generative AI thrives on rich context. To get a high-quality output, users must provide high-quality input. This operational reality encourages employees to upload confidential legal documents, financial projections, and proprietary algorithms directly into public interfaces.

The velocity of this adoption is staggering. Telemetry data from global organizations shows an explosive acceleration in unsanctioned AI access. Traffic to generative AI domains surged by 50% between February 2024 and January 2025, reaching 10.53 billion individual visits, with 80% of this access happening directly through web browsers.

The Staggering Statistics of Unsanctioned AI

The gap between workforce AI adoption and enterprise security readiness has created a vast governance vacuum. The data paints a clear picture of experimentation that prioritizes efficiency over confidentiality.

| Adoption and Exposure Metric | Observed Statistical Value | Security Implication |
|---|---|---|
| Personal Account Usage | 68% of employees use free-tier AI tools via personal accounts | Bypasses all corporate identity and access management controls |
| Sensitive Data Input | 57% of employees admit to entering sensitive information into AI | Direct exfiltration of intellectual property without malicious intent |
| Unauthorized Sharing | 43% deliberately share sensitive work information without permission | Systemic failure in employee education regarding data classification |
| Lack of Governance | 63% of organizations operate without formal AI governance policies | Legal and compliance exposure amplified due to zero internal auditing |
| Blindness to Data Flows | 86% of organizations remain entirely blind to AI-related data flows | Incident response teams cannot calculate the blast radius of a breach |

The average enterprise unknowingly hosts traffic to approximately 1,200 unofficial applications. Tracking systems currently monitor over 1,550 distinct generative AI SaaS applications, representing a near-quintupling of available platforms since early 2024. On top of that, more than 6,500 active generative AI domains have been observed, highlighting a secondary risk of domain spoofing. Threat actors rapidly register domains mirroring legitimate AI services to harvest sensitive prompts and capture corporate credentials.
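Defenders can screen DNS or proxy logs for such lookalikes. The sketch below is a minimal illustration under stated assumptions: it compares each observed domain against a hypothetical allowlist of legitimate AI domains using Levenshtein edit distance and flags near misses. The domain list and the distance threshold of 2 are assumptions, not vetted values.

```typescript
// Hypothetical allowlist: the legitimate AI domains this organization recognizes.
const KNOWN_AI_DOMAINS: readonly string[] = [
  "chatgpt.com",
  "openai.com",
  "claude.ai",
  "gemini.google.com",
];

// Levenshtein edit distance (insert, delete, substitute each cost 1),
// computed with a single rolling row of the dynamic-programming table.
function levenshtein(a: string, b: string): number {
  const row = Array.from({ length: b.length + 1 }, (_, j) => j);
  for (let i = 1; i <= a.length; i++) {
    let diag = row[0]; // distance for (i - 1, j - 1)
    row[0] = i;
    for (let j = 1; j <= b.length; j++) {
      const above = row[j]; // distance for (i - 1, j)
      const cost = a[i - 1] === b[j - 1] ? 0 : 1;
      row[j] = Math.min(above + 1, row[j - 1] + 1, diag + cost);
      diag = above;
    }
  }
  return row[b.length];
}

// Returns the legitimate domain that `domain` imitates, or null if it is
// either legitimate itself or not close to any known AI domain.
// The default threshold of 2 edits is an assumption.
function findLookalike(domain: string, maxDistance = 2): string | null {
  const d = domain.toLowerCase().replace(/\.$/, "");
  // Exact matches and genuine subdomains are not lookalikes.
  if (KNOWN_AI_DOMAINS.some((k) => d === k || d.endsWith("." + k))) return null;
  for (const known of KNOWN_AI_DOMAINS) {
    if (levenshtein(d, known) <= maxDistance) return known;
  }
  return null;
}
```

Edit distance only catches typo-style spoofs; combosquats such as `chatgpt-login.com` or homoglyph domains need additional rules, and a real deployment would pull its domain list from a maintained threat-intelligence feed.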

Real-World Shadow AI Data Leaks

The theoretical vulnerabilities of Shadow AI have materialized into severe, highly publicized corporate incidents. These cases demonstrate that the risk transcends industry boundaries, affecting manufacturing, finance, technology, and healthcare sectors alike.

The Samsung Source Code Incident

The watershed moment for Shadow AI awareness occurred within Samsung's semiconductor division. In an effort to boost engineering productivity, Samsung temporarily permitted the use of public generative AI platforms. Within a mere 20-day window, the organization experienced three separate critical data leaks.

In the first instance, an engineer pasted highly confidential proprietary source code directly into a chatbot interface to identify syntactical errors. A second employee uploaded a different segment of code requesting algorithmic optimization. A third employee recorded an internal executive meeting, transcribed the audio, and uploaded the entire transcript to generate meeting minutes.

Because the consumer service at the time could retain user inputs and use them to train future models, Samsung had no way to retrieve or delete the submitted data. The organization lost control of that intellectual property the moment it was pasted, prompting an emergency limit on upload capacity of 1024 bytes per person, followed by a ban on generative AI tools across company devices and internal networks.

The Financial Sector Response

Concerns soon surfaced well beyond manufacturing. Amazon warned employees against sharing confidential information with public AI chatbots after noticing that the chatbot's responses closely mirrored internal company data, which suggested that staff had been using the tool to process sensitive corporate documentation.

The financial sector, bound by stringent regulatory frameworks, responded even more decisively. Major institutions including JPMorgan Chase, Bank of America, Citigroup, Deutsche Bank, Wells Fargo, and Goldman Sachs rapidly restricted or banned employee use of external AI platforms. These institutions recognized that processing client financial data through unvetted third-party services could breach privacy and data-handling regulations.

The Financial and Regulatory Impact

The deployment of unsanctioned artificial intelligence doesn't merely increase the probability of a security incident. It significantly amplifies the financial devastation when an incident occurs.

According to IBM's 2025 Cost of a Data Breach Report, organizations with low or no Shadow AI usage paid an average of approximately $4.07 million per breach, while organizations with high levels of Shadow AI usage paid an average of $4.74 million. Unmanaged AI therefore adds a breach premium of roughly $670,000 per incident.

Drivers of the Financial Premium

Several compounding factors drive this financial penalty:

- Prolonged Detection Timelines: Shadow AI breaches require an average of 247 days to detect. This is significantly longer than standard network breaches, allowing data exposure to compound silently over many months.

- Elevated Data Sensitivity: Breaches linked to Shadow AI disproportionately compromise Personally Identifiable Information (65% exposure rate) and critical Intellectual Property (40% exposure rate).

- Total Absence of Access Controls: Among organizations reporting AI-related breaches, 97% lacked proper AI access controls, which makes containment considerably harder and more expensive.

Beyond external breaches, the internal economic drain is substantial. The annual cost of insider risk has escalated to an average of $19.5 million per organization. Crucially, 53% of this expenditure is driven not by malicious corporate espionage, but by non-malicious actors engaging in negligent behaviors primarily associated with Shadow AI utilization.

Regulatory Non-Compliance and Fines

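The legal landscape in 2026 actively punishes poor AI governance. Article 35 of the General Data Protection Regulation (GDPR) requires a Data Protection Impact Assessment before any processing that is likely to result in a high risk to individuals, particularly when new technologies are involved, and many AI systems handling the personal data of people in the European Union fall into that category. Because Shadow AI bypasses internal review entirely, organizations cannot assess, let alone document, processing they do not know is happening.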

This isn't just a theoretical legal debate. In 2024, a major Madrid-based financial institution faced an $8.9 million fine for utilizing unapproved artificial intelligence systems in its loan approval workflows. Organizations using content generated by unsanctioned tools also run severe risks of copyright infringement. Telemetry indicates that 29% of AI-generated marketing content contains traceable elements of proprietary or copyrighted data belonging to external entities.

How Shadow AI Evades Traditional Security

Understanding the mechanics of Shadow AI requires analyzing the specific vectors through which data exits the enterprise environment. The failure of traditional security architecture to mitigate these vectors is rooted in the technological evolution of the web browser.

The modern browser has effectively become the enterprise operating system. Legacy Data Loss Prevention (DLP) systems were engineered for an era of explicit file transfers, email attachments, and unencrypted internal network traffic. They operate by inspecting network packets for recognizable data signatures.

The Browser Blind Spot

Browser-based AI workflows neutralize legacy DLP entirely. Consider the mechanics of modern data exposure:

- No files to scan: Interaction consists of continuous, fragmented text strings typed or pasted directly into dynamic web applications.

- Asynchronous uploads: Content leaves through JavaScript-driven requests issued from within the page, not through discrete file-transfer channels such as FTP or email attachments.

- Encrypted sessions: These sessions are protected by Transport Layer Security (TLS), so unless the organization decrypts and inspects that traffic, network-layer appliances see only the destination, never the payload content.

From the perspective of a legacy network sensor, an employee uploading the source code for an unreleased flagship product to an AI chatbot appears structurally identical to an employee typing a benign web search. In a single month, security telemetry logged 155,005 explicit copy attempts and 313,120 paste attempts into unsanctioned generative AI interfaces. At the exact moment data is pasted into the prompt window of a public model, the information irreversibly exits the enterprise perimeter.
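Because the sensitive material arrives as pasted text rather than files, any effective control has to classify the text itself. The following sketch shows what a minimal text-level classifier might look like. The detector labels, the regular expressions, and the source-code heuristic (at least three code-like lines making up 30% or more of the paste) are all illustrative assumptions, not a production rule set.

```typescript
// Hypothetical detector set: each entry pairs a label with a regex for text
// a DLP policy might treat as sensitive. Patterns are illustrative only.
const DETECTORS: ReadonlyArray<{ label: string; pattern: RegExp }> = [
  { label: "aws-access-key-id", pattern: /\bAKIA[0-9A-Z]{16}\b/ },
  { label: "private-key", pattern: /-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----/ },
  { label: "email", pattern: /\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b/ },
  { label: "us-ssn", pattern: /\b\d{3}-\d{2}-\d{4}\b/ },
];

// Crude heuristic: count lines that end like statements/blocks or start with
// common declaration keywords. Thresholds are assumptions.
function looksLikeSourceCode(text: string): boolean {
  const lines = text.split("\n").filter((l) => l.trim().length > 0);
  const codeLike = lines.filter(
    (l) =>
      /[;{}]\s*$/.test(l) ||
      /^\s*(import|from|def|class|function|public|private|#include)\b/.test(l),
  );
  return codeLike.length >= 3 && codeLike.length / lines.length >= 0.3;
}

// Returns the labels of every detector that fires on the pasted text.
function classifyPastedText(text: string): string[] {
  const hits = DETECTORS.filter((d) => d.pattern.test(text)).map((d) => d.label);
  if (looksLikeSourceCode(text)) hits.push("source-code");
  return hits;
}
```

Regex detectors like these produce false positives and miss paraphrased or lightly obfuscated data, so they are usually one signal among several rather than a complete control.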

Advanced Evasion: Malware and AI APIs

While the primary threat vector remains employee negligence, sophisticated threat actors have recognized the massive volume of unmonitored LLM traffic. They've adapted their methodologies to exploit this blind spot.

Advanced threat hunting telemetry recently exposed new strains of malware utilizing legitimate large language model API endpoints to establish Command and Control (C2) infrastructure. Traditionally, malware communicates with remote servers using suspicious, newly registered domains. Modern evasion tactics, however, route C2 traffic directly through the official APIs of highly trusted AI providers.

In these attacks, the malware opens an outbound connection to an authorized AI service's API and exchanges seemingly benign requests containing Base64-encoded strings. The attacker's commands come back inside the API responses, and exfiltrated data travels out inside the requests. Because the traffic flows over TLS-encrypted sessions to trusted IP addresses owned by major cloud providers, standard perimeter defenses classify the activity as safe, sanctioned AI interaction. Abusing a trusted AI API as a relay in this way leaves destination-based security tools with almost nothing to flag.
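A proxy that can see prompt bodies has one simple signal for this pattern: ordinary business prompts rarely contain long, unbroken runs of Base64. The sketch below is an illustrative heuristic, not a documented detection for any specific malware family. It flags runs of 40 or more Base64-alphabet characters whose length is a multiple of four and which mix upper case, lower case, and digits; the 40-character floor is an assumption.

```typescript
// Runs of the Base64 alphabet (RFC 4648 standard form), optionally padded.
// The 40-character minimum is an assumed threshold.
const BASE64_RUN = /[A-Za-z0-9+\/]{40,}={0,2}/g;

// Returns candidate encoded blobs found in an outbound prompt.
function findEncodedBlobs(prompt: string): string[] {
  const candidates: string[] = prompt.match(BASE64_RUN) || [];
  return candidates.filter(
    (run) =>
      run.length % 4 === 0 && // well-formed Base64 length
      /[0-9]/.test(run) &&
      /[a-z]/.test(run) &&
      /[A-Z]/.test(run), // natural-language words rarely mix all three
  );
}
```

Legitimate content such as embedded images, API tokens, and signed URLs will also trip this check, so it works better as a signal that raises a prompt's risk score than as a standalone block rule.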

How to Identify Shadow AI in the Workplace

To combat these multifaceted risks, organizations must implement sophisticated detection architectures capable of analyzing behavior at the endpoint, the browser, and the network API level. You can't secure what you can't see.

Deploying Cloud Access Security Brokers (CASB)

A primary mechanism for surfacing unsanctioned AI application usage is deploying a CASB. A properly configured CASB provides broad visibility into SaaS and API activity across the corporate environment. It detects applications operating outside approved procurement inventories, flags covert data transfers to known generative AI platforms, and identifies employees using personal credentials to access AI systems.
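At its core, this kind of discovery joins traffic logs against a catalogue of known AI services. The sketch below is a simplified, hypothetical illustration of that join. The `ProxyLogEntry` record shape, the host lists, the `accountDomain` field (standing in for whatever identity signal a proxy or SSO log exposes), and `example.com` as the corporate domain are all assumptions.

```typescript
// Hypothetical shape of one web-proxy log record.
interface ProxyLogEntry {
  userId: string;
  host: string; // destination hostname
  accountDomain?: string; // identity domain seen at login, if the proxy exposes it
  bytesOut: number;
}

interface ShadowAiFinding {
  userId: string;
  host: string;
  reason: "unsanctioned-app" | "personal-account";
  bytesOut: number;
}

// Hypothetical catalogue of generative AI hosts and the subset IT has approved.
const GENAI_HOSTS = new Set(["chatgpt.com", "claude.ai", "gemini.google.com", "perplexity.ai"]);
const SANCTIONED_HOSTS = new Set(["chatgpt.com"]);
const CORPORATE_DOMAIN = "example.com"; // hypothetical corporate identity domain

function findShadowAi(entries: ProxyLogEntry[]): ShadowAiFinding[] {
  const results: ShadowAiFinding[] = [];
  for (const e of entries) {
    if (!GENAI_HOSTS.has(e.host)) continue; // not an AI destination
    if (!SANCTIONED_HOSTS.has(e.host)) {
      results.push({ userId: e.userId, host: e.host, reason: "unsanctioned-app", bytesOut: e.bytesOut });
    } else if (e.accountDomain !== undefined && e.accountDomain !== CORPORATE_DOMAIN) {
      // Approved tool, but reached with a non-corporate (personal) identity.
      results.push({ userId: e.userId, host: e.host, reason: "personal-account", bytesOut: e.bytesOut });
    }
  }
  return results;
}
```

Commercial CASBs catalogue thousands of applications and match on more than exact hostnames, but the output is the same kind of list: who is using which unsanctioned tool, and who is using a sanctioned tool outside corporate identity.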

Advanced Traffic Analysis

CASB solutions must be augmented with deep traffic analysis. Traditional firewalls fail to interpret complex API payloads, often categorizing a connection to an external vendor as legitimate web traffic.

Traffic analysis goes beyond simple destination logging by identifying anomalous, bursty traffic patterns, such as erratic transmission spikes consistent with LLM inference requests from unauthorized IP addresses or unmanaged devices. By establishing a baseline of normal network rhythm, anomaly detection systems can flag sudden, sustained data uploads, giving responders a chance to intervene before a small leak becomes a large exfiltration event.
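A per-host baseline can be as simple as a rolling mean and standard deviation. This sketch flags any hour in which a host's upload volume to AI destinations sits at least three standard deviations above its previous 24 hours. The window length, the threshold, and the one-byte floor on the standard deviation are assumptions chosen for illustration.

```typescript
// Returns the indices of hours whose upload volume is anomalously high
// relative to the preceding `windowSize` hours for the same host.
function flagUploadBursts(hourlyBytes: number[], windowSize = 24, zThreshold = 3): number[] {
  const flaggedHours: number[] = [];
  for (let i = windowSize; i < hourlyBytes.length; i++) {
    const baseline = hourlyBytes.slice(i - windowSize, i);
    const mean = baseline.reduce((sum, x) => sum + x, 0) / windowSize;
    const variance = baseline.reduce((sum, x) => sum + (x - mean) ** 2, 0) / windowSize;
    // Floor the standard deviation at one byte so a perfectly flat baseline
    // does not cause a division by zero.
    const std = Math.max(Math.sqrt(variance), 1);
    const z = (hourlyBytes[i] - mean) / std;
    if (z >= zThreshold) flaggedHours.push(i);
  }
  return flaggedHours;
}
```

One weakness is visible in the sketch: a flagged spike stays inside the rolling window and inflates the baseline for the next 24 hours, so production systems typically exclude flagged points or use robust statistics such as the median absolute deviation.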

Browser-Native Telemetry

Relying solely on network logs isn't enough when data manipulation occurs entirely within the DOM of a web page. Organizations need browser-native detection capabilities that intercept text inputs and file uploads at the precise moment of user interaction. By parsing the metadata of browser sessions, security teams can differentiate between sanctioned corporate AI usage and unapproved generative features embedded within trusted platforms.
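In practice, this usually means a managed extension whose content script listens for paste events on AI sites. The sketch below assumes a hypothetical host list, a deliberately tiny secret detector, and a `report` callback standing in for whatever telemetry pipeline the organization uses. It declares minimal structural types so it compiles without DOM typings; in a real content script you would pass `document` as the target.

```typescript
// Minimal structural types covering only what the guard uses, so the sketch
// compiles without DOM typings. A browser ClipboardEvent satisfies PasteEventLike.
interface PasteEventLike {
  clipboardData: { getData(format: string): string } | null;
  preventDefault(): void;
}
interface PasteTarget {
  addEventListener(type: "paste", listener: (e: PasteEventLike) => void, useCapture?: boolean): void;
}

// Hypothetical list of AI hosts where pastes should be inspected.
const WATCHED_AI_HOSTS = ["chatgpt.com", "claude.ai"];

// Hypothetical sensitive-content check; a real extension would use a richer classifier.
function containsSensitiveData(text: string): boolean {
  return /\bAKIA[0-9A-Z]{16}\b/.test(text) || /-----BEGIN [A-Z ]*PRIVATE KEY-----/.test(text);
}

function registerPasteGuard(
  target: PasteTarget,
  currentHost: string,
  report: (host: string, reason: string) => void,
): void {
  if (WATCHED_AI_HOSTS.indexOf(currentHost) === -1) return; // not an AI site
  // Capture phase: runs before handlers attached to the page's own input elements.
  target.addEventListener(
    "paste",
    (e) => {
      const text = e.clipboardData ? e.clipboardData.getData("text/plain") : "";
      if (containsSensitiveData(text)) {
        e.preventDefault(); // stop the browser from inserting the clipboard text
        report(currentHost, "sensitive-paste-blocked");
      }
    },
    true,
  );
}
```

Because the check runs inside the page, it sees the plaintext before TLS encryption, which is exactly the content a network sensor cannot observe.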

Securing AI Workflows: Governance vs. Prohibition

The immediate, reflexive response of corporate leadership to the Shadow AI crisis has historically been absolute prohibition. Roughly one in four organizations initially responded to the generative AI surge by enacting comprehensive network bans.

However, usage telemetry shows that prohibition fails as a security strategy.

The Failure of Blanket Bans

Banning AI tools doesn't extinguish workforce demand. It merely drives the behavior underground, transforming visible risk into invisible, unquantifiable danger. An MIT study revealed that while only 40% of companies have purchased an official enterprise LLM subscription, workers from over 90% of companies surveyed reported regular use of personal AI tools for work tasks.

Research consistently indicates that nearly half of all employees continue using personal AI accounts even after strict organizational bans are officially communicated. When IT departments mandate blanket bans, employees turn to personal mobile devices, virtual private networks, or hidden browser extensions to keep using AI. A policy meant to maximize security thus paradoxically produces an environment that is harder to monitor.

Establishing Dynamic AI Governance

The only viable strategic posture for the modern enterprise is the transition from absolute prohibition to governed, safe enablement. Organizations must develop explicit acceptable use policies that clearly define permissible use cases while unambiguously identifying data classifications strictly prohibited from external processing.
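Writing the policy down as data makes it enforceable by tooling as well as readable by employees. The sketch below encodes one hypothetical matrix: four classification levels and three tiers of AI destination, each tier with a ceiling on the most sensitive data it may receive. The level names, tier names, and ceilings are assumptions to adapt, not a recommended standard.

```typescript
// Hypothetical data classification levels, from least to most sensitive.
type Classification = "public" | "internal" | "confidential" | "restricted";
// Hypothetical tiers of AI destination.
type AiDestination = "enterprise-tenant" | "approved-saas" | "consumer-tool";

const RANK: Record<Classification, number> = {
  public: 0,
  internal: 1,
  confidential: 2,
  restricted: 3,
};

// Most sensitive classification each destination may receive. "restricted"
// (e.g. source code, client financials) sits above every ceiling here.
const MAX_CLASSIFICATION: Record<AiDestination, Classification> = {
  "enterprise-tenant": "confidential",
  "approved-saas": "internal",
  "consumer-tool": "public",
};

function isUseAllowed(data: Classification, destination: AiDestination): boolean {
  return RANK[data] <= RANK[MAX_CLASSIFICATION[destination]];
}
```

Keeping `restricted` above every ceiling means that class of data can never be sent to any external model under this policy, regardless of which tool an employee picks.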

Employee education serves as a primary deterrent. Rather than issuing abstract punitive threats, security teams should proactively conduct awareness workshops illustrating the exact mechanics of data leakage. Sharing real-world examples resonates far more effectively with the workforce than esoteric compliance mandates. For a deeper look at how attackers exploit AI systems through crafted prompts, our post on prompt injection attacks covers the fundamentals.

Preventing Shadow AI Leaks with LockLLM

While policy and education form the foundation of AI governance, technological enforcement mechanisms are required to ensure compliance at machine speed. LockLLM provides a comprehensive enterprise AI proxy and browser extension architecture capable of intercepting, analyzing, and neutralizing risks in real-time.

LockLLM functions as a centralized, secure conduit situated between the corporate workforce and the vast ecosystem of external LLM providers. By routing AI requests through a unified proxy, organizations gain full visibility into, and granular control over, every prompt that passes through it.

Implementing the LockLLM AI Proxy

The technological efficacy of the LockLLM gateway relies on highly specialized, model-driven detection engines. Unlike basic keyword filters easily bypassed by creative prompting, LockLLM utilizes dedicated neural networks trained to detect real-life attack patterns. In rigorous benchmark testing, the proprietary detection model achieves an average F1 score of 0.974, significantly outperforming generic open-source classifiers.
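For context on that figure: F1 is the harmonic mean of precision and recall, computed from the counts of true positives (TP), false positives (FP), and false negatives (FN) on a labeled benchmark.

```latex
\mathrm{Precision} = \frac{TP}{TP + FP}, \qquad
\mathrm{Recall} = \frac{TP}{TP + FN}, \qquad
F_1 = \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}
```

Because the harmonic mean is pulled toward the smaller of its two inputs, a score of 0.974 is only possible when both precision and recall are high (each roughly 0.95 or above), meaning few missed attacks and few false alarms.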

The proxy intercepts traffic, applies real-time risk scoring, and returns a confidence score in milliseconds. Based on these dynamic risk signals, the system automatically executes predefined actions. It can allow the prompt, flag it for administrative review, block it entirely, or actively sanitize the payload by redacting sensitive information.

```typescript
// Route internal application traffic through the LockLLM proxy
import { LockLLM } from '@lockllm/sdk';

const lockllm = new LockLLM({
  apiKey: process.env.LOCKLLM_API_KEY,
  proxyMode: true,
  // Enforce enterprise data protection policies
  policies: ['redact-pii', 'block-source-code', 'prevent-prompt-injection'],
});

async function safeGenerateResponse(userPrompt: string, employeeId: string) {
  // LockLLM intercepts the payload, applies its detection models, and redacts
  // sensitive data before forwarding it to the target LLM provider
  const response = await lockllm.chat.completions.create({
    model: 'gpt-4',
    messages: [{ role: 'user', content: userPrompt }],
    // Attribute the request to the calling employee for auditing
    user: employeeId,
  });

  return response;
}
```

This architecture keeps latency-sensitive applications performant. LockLLM maintains sub-100 millisecond scanning latency, and its response caching reduces token usage and lowers overall operational costs.
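The allow, flag, block, and sanitize decision described earlier amounts to mapping detector outputs onto actions. The following sketch is a hypothetical illustration, not LockLLM's API or its default logic: `ScanResult`, its fields, and the 0.9 and 0.5 thresholds are assumptions.

```typescript
// Hypothetical enforcement actions mirroring allow / flag / sanitize / block.
type Action = "allow" | "flag" | "sanitize" | "block";

// Hypothetical scanner output for one prompt.
interface ScanResult {
  injectionScore: number; // 0..1 confidence that the prompt is an injection attempt
  containsPii: boolean;
}

// Thresholds are illustrative assumptions, not vendor defaults.
function decideAction(r: ScanResult, blockAt = 0.9, flagAt = 0.5): Action {
  if (r.injectionScore >= blockAt) return "block"; // high-confidence attack
  if (r.containsPii) return "sanitize"; // redact, then let the request proceed
  if (r.injectionScore >= flagAt) return "flag"; // uncertain: send for review
  return "allow";
}
```

The ordering is the policy: a high-confidence injection is rejected outright, while a prompt containing PII is redacted and allowed to continue, which keeps careless but benign requests flowing.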

Enforcing Policies via the Browser Extension

To address the specific vulnerabilities of browser-based data exfiltration, LockLLM offers a specialized, centrally managed browser extension. This extension operates directly within the DOM, automatically intercepting messages before they reach consumer AI services like ChatGPT or Claude.

This eliminates reliance on manual copy-paste detection. Through organizational management, IT administrators deploy the extension via Mobile Device Management (MDM) or Group Policy, enforcing encryption policies and defining custom entity patterns for internal proprietary data.

When an employee attempts to paste sensitive project code into a web interface, the extension automatically redacts the information. When the AI returns a response containing the redacted placeholder, the extension securely de-anonymizes the data locally. It displays the original data to the user with specific cryptographic verification badges. This approach ensures that employee productivity remains entirely uninterrupted while corporate data never touches external servers in plaintext.
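The redact-and-restore round trip can be illustrated with a local placeholder vault. This is a minimal sketch of the general technique, not LockLLM's implementation: the two entity patterns (an email matcher and a hypothetical `Project <Name>` codename rule) and the `[KIND_n]` placeholder format are assumptions.

```typescript
// Hypothetical entity patterns: an email matcher plus a custom codename rule.
const ENTITY_PATTERNS: ReadonlyArray<{ kind: string; pattern: RegExp }> = [
  { kind: "EMAIL", pattern: /[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}/g },
  { kind: "PROJECT", pattern: /\bProject [A-Z][a-z]+\b/g }, // hypothetical codename format
];

interface Redaction {
  text: string; // what may be sent to the external model
  vault: Map<string, string>; // placeholder -> original; stays on the endpoint
}

// Replace each sensitive value with a numbered placeholder, reusing the same
// placeholder when a value repeats.
function redact(input: string): Redaction {
  const vault = new Map<string, string>();
  const seen = new Map<string, string>(); // original -> placeholder
  let text = input;
  for (const { kind, pattern } of ENTITY_PATTERNS) {
    text = text.replace(pattern, (match) => {
      const existing = seen.get(match);
      if (existing) return existing;
      const placeholder = `[${kind}_${vault.size + 1}]`;
      vault.set(placeholder, match);
      seen.set(match, placeholder);
      return placeholder;
    });
  }
  return { text, vault };
}

// Put the original values back into the model's response, locally.
function restore(response: string, vault: Map<string, string>): string {
  let out = response;
  vault.forEach((original, placeholder) => {
    out = out.split(placeholder).join(original);
  });
  return out;
}
```

Only the redacted text and its placeholders leave the endpoint; the vault that maps them back to real values stays in local memory, which is what lets the response be restored without exposing the originals.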

Best Practices and Strategic Takeaways

The rapid, unauthorized adoption of generative artificial intelligence by the global workforce has fundamentally compromised traditional data perimeters. Organizations must adopt proactive measures to secure their environments against Shadow AI.

To maintain security without stifling innovation, security teams should implement the following best practices:

- Audit current utilization: Deploy CASB and browser discovery tools to map exactly which generative AI platforms your workforce currently accesses.

- Abolish blanket bans: Recognize that prohibition fails. Focus on establishing secure, sanctioned pathways for AI utilization.

- Deploy AI-native DLP: Traditional network sensors can't inspect asynchronous browser workflows. Implement solutions designed specifically for generative text interactions.

- Centralize API traffic: Route all programmatic LLM interactions through a dedicated security proxy to detect prompt injection, malware payloads, and automated data exfiltration.

- Implement real-time redaction: Utilize managed browser extensions to automatically sanitize sensitive data before it leaves the endpoint.

For a comprehensive approach to securing your AI architecture, review our integration guide or explore the advanced features of the LockLLM dashboard. By acknowledging the reality of AI adoption and deploying targeted technological enforcement, organizations can harness the productivity benefits of artificial intelligence without sacrificing their intellectual property.